Now this may begin to remind you of Boolean logic — you’re taking inputs of zeros and ones and sending out zeros and ones. In fact, except for the “carry 1” part of the last rule, this looks very much like the truth table for the XOR logical operator:

| Input 1 | Input 2 | Output |
| --- | --- | --- |
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |

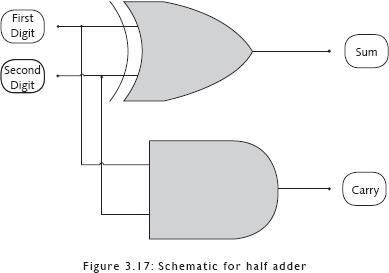
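
As a quick check of that resemblance, here is a minimal Python sketch. The language and the name `xor_gate` are choices made for illustration; nothing in the text prescribes them. It compares XOR against the digit written in a single column of binary addition, and shows that only the 1 + 1 row produces a carry.

```python
def xor_gate(a: int, b: int) -> int:
    """Exclusive OR on single bits: 1 when exactly one input is 1."""
    return a ^ b


for a in (0, 1):
    for b in (0, 1):
        total = a + b          # ordinary integer addition: 0, 1, or 2
        column_digit = total % 2   # digit written in this column
        carry = total // 2         # digit carried to the next column
        print(f"{a} + {b}: xor={xor_gate(a, b)} "
              f"column digit={column_digit} carry={carry}")
```

In every row the XOR output equals the column digit; the carry is the part XOR alone cannot supply.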

It turns out that if you put together certain logical operators in clever ways, you can completely replicate addition in binary, including the “carry 1” part. Here is a schematic for a “half adder,” built by combining an XOR operator and an AND operator, which takes in two single binary digits and outputs a sum digit and a carry digit (0 when there is nothing to carry).

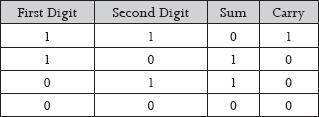

So the half adder would function as follows:

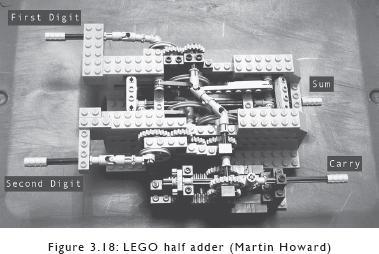
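
The same behavior can be sketched in Python, assuming single bits are represented as the integers 0 and 1. The function name `half_adder` and the tuple it returns are illustrative choices, not part of the original schematic.

```python
from typing import Tuple


def half_adder(a: int, b: int) -> Tuple[int, int]:
    """Return (sum_bit, carry_bit) for two single binary digits."""
    sum_bit = a ^ b      # XOR: the digit written in this column
    carry_bit = a & b    # AND: 1 only when both inputs are 1
    return sum_bit, carry_bit


# Print the full table of inputs and outputs.
for a in (0, 1):
    for b in (0, 1):
        sum_bit, carry_bit = half_adder(a, b)
        print(f"{a} + {b} -> sum {sum_bit}, carry {carry_bit}")
```

Read together as "carry, sum," the two output bits spell the binary result: 1 + 1 gives carry 1 and sum 0, which is binary 10, or two.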

And since we can build logic gates — physical objects that replicate logical operations — we should be able to build a physical half adder. And, indeed, here is a LEGO half adder built by Martin Howard.

At last we have computation, which is, according to the Oxford English Dictionary (OED), the “action or process of computing, reckoning, or counting; arithmetical or mathematical calculation.” A “computer” was originally, as the OED also tells us, a “person who makes calculations or computations; a calculator, a reckoner; spec. a person employed to make calculations in an observatory, in surveying, etc.” Charles Babbage set out to create a machine that would replace the vast throngs of human computers who worked out logarithmic and trigonometric tables; what we’ve sketched out above are the beginnings of a mechanism which can do exactly that and more. You can use the output of the half adder as input for other mechanisms, and also continue to add logic gates to it to perform more complex operations. You can hook two half adders together and add an OR logic gate to make a “full adder,” which will accept two binary digits and also a carry digit as input, and will output a sum and a carry digit. You can then put together cascades of full adders to add binary numbers eight columns wide, or sixteen, or thirty-two. This adding machine “knows” nothing; it is just a clever arrangement of physical objects that can go from one state to another, and by doing so cause changes in other physical objects. The revolutionary difference between it and the first device that Charles Babbage built, the Difference Engine, is that it represents data and logic in zeros and ones, in discrete digits; it is “digital,” as opposed to the earlier “analog” devices, all the way back through slide rules and astrolabes and the Antikythera mechanism. Babbage’s planned second device, the Analytical Engine, would have been a digital, programmable computer, but the technology and engineering of his time were not able to implement what he had imagined.
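
The full adder and the cascade described above can be sketched as follows. This is a minimal Python illustration under stated assumptions: bits are the integers 0 and 1, and the names `full_adder` and `ripple_add` and the default width of eight columns are hypothetical choices, not a description of any particular machine.

```python
from typing import Tuple


def half_adder(a: int, b: int) -> Tuple[int, int]:
    """XOR gives the sum bit, AND gives the carry bit."""
    return a ^ b, a & b


def full_adder(a: int, b: int, carry_in: int) -> Tuple[int, int]:
    """Two half adders plus an OR gate: returns (sum_bit, carry_out)."""
    partial_sum, carry_1 = half_adder(a, b)
    sum_bit, carry_2 = half_adder(partial_sum, carry_in)
    return sum_bit, carry_1 | carry_2   # at most one of the carries is 1


def ripple_add(x: int, y: int, width: int = 8) -> Tuple[int, int]:
    """Add two numbers column by column, rightmost column first.

    Returns (result, final_carry); the result is truncated to `width` bits.
    """
    carry = 0
    result = 0
    for column in range(width):
        a = (x >> column) & 1            # bit of x in this column
        b = (y >> column) & 1            # bit of y in this column
        sum_bit, carry = full_adder(a, b, carry)
        result |= sum_bit << column      # place the sum bit in its column
    return result, carry


print(ripple_add(5, 3))      # (8, 0)
print(ripple_add(255, 1))    # (0, 1): the ninth bit falls off as a carry
```

Each full adder passes its carry to the next column, just as in addition by hand, which is why widening the machine to sixteen or thirty-two columns only means lengthening the cascade.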

Once you have objects that can materialize both Boolean algebra and binary numbers, you can connect these components in ways that allow the computation of mathematical functions. Line up sufficiently large numbers of simple on/off mechanisms, and you have a machine that can add, subtract, multiply, and through these mathematical operations format your epic novel in less time than you will take to finish reading this sentence. Computers can only compute, calculate; the poems you write, the pictures of your family, the music you listen to — all these are converted into binary numbers, sequences of ones and zeros, and are thus stored and changed and re-created. Your computer allows you to read, see, and hear by representing binary numbers as letters, images, and sounds. Computers may seem mysteriously active, weirdly alive, but they are mechanical devices like harvesting combines or sewing machines.
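
To make the idea of text stored as binary numbers concrete, here is a small Python sketch. It assumes the UTF-8 encoding, which the text does not specify; the helper names `to_bits` and `from_bits` are made up for this example.

```python
def to_bits(text: str) -> str:
    """Encode text as UTF-8 and show each byte as eight binary digits."""
    data = text.encode("utf-8")
    return " ".join(format(byte, "08b") for byte in data)


def from_bits(bits: str) -> str:
    """Reverse of to_bits: turn groups of binary digits back into text."""
    data = bytes(int(chunk, 2) for chunk in bits.split())
    return data.decode("utf-8")


encoded = to_bits("Hi")
print(encoded)              # 01001000 01101001
print(from_bits(encoded))   # Hi
```

The letters exist for the reader; what the machine stores and changes is only the sequence of ones and zeros.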

You can build logic gates out of any material that can accept inputs and switch between distinct states of output (current or no current, 1 or 0); there is nothing special about the chips inside your laptop that makes them essential to computing. Electrical circuits laid out in silicon just happen to be small, cheap, relatively reliable, and easy to produce in mass quantities. The Digi-Comp II, which was sold as a toy in the sixties, used an inclined wooden plane, plastic cams, and marbles to perform binary mathematical operations. The vast worlds inside online games provide virtual objects that can be made to interact predictably, and some people have used these objects to make computing machines inside the games. Jong89, the creator of the “Dwarven Computer” in the game Dwarf Fortress, used “672 [virtual water] pumps, 2000 [faux wooden] logs, 8500 mechanisms and thousands of other assort[ed] bits and knobs like doors and rock blocks” to put together his device, which is a fully functional computer that can perform any calculation that a “real” computer can.2 Logic gates have been built out of pneumatic, hydraulic, and optical devices, out of DNA, and out of flat sticks connected by rivets. Recently, some researchers from Kobe University in Japan announced, “We demonstrate that swarms of soldier crabs can implement logical gates when placed in a geometrically constrained environment.”3
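
One way to see why the material does not matter is to build the same half adder from interchangeable gate implementations. The Python sketch below is only an analogy: the three "substrates" (bitwise operators, lookup tables, and plain arithmetic) and the helper `make_half_adder` are hypothetical stand-ins for marbles, water pumps, or crabs.

```python
from typing import Callable, Tuple

Gate = Callable[[int, int], int]


def make_half_adder(xor_gate: Gate, and_gate: Gate) -> Callable[[int, int], Tuple[int, int]]:
    """Wire any XOR gate and any AND gate into a half adder."""
    def half_adder(a: int, b: int) -> Tuple[int, int]:
        return xor_gate(a, b), and_gate(a, b)
    return half_adder


# Substrate 1: Python's bitwise operators.
bitwise = make_half_adder(lambda a, b: a ^ b, lambda a, b: a & b)

# Substrate 2: lookup tables, a stand-in for a fixed physical arrangement.
XOR_TABLE = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}
AND_TABLE = {(0, 0): 0, (0, 1): 0, (1, 0): 0, (1, 1): 1}
tabular = make_half_adder(lambda a, b: XOR_TABLE[(a, b)],
                          lambda a, b: AND_TABLE[(a, b)])

# Substrate 3: ordinary arithmetic on 0 and 1.
arithmetic = make_half_adder(lambda a, b: (a + b) % 2, lambda a, b: a * b)

for a in (0, 1):
    for b in (0, 1):
        print(a, b, bitwise(a, b), tabular(a, b), arithmetic(a, b))
```

All three adders agree on every input, because the wiring depends only on what the gates do, not on what they are made of.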

Many years after I stopped working professionally as a programmer, I finally understood this, truly grokked this fact — that you can build a logic gate out of water and pipes and valves, no electricity needed, and from the interaction of these physical objects produce computation. The shock of the revelation turned me into a geek party bore. I arranged toothpicks on dinner tables to lay out logic-gate schematics, and harassed my friends with disquisitions about the life and work of George Boole. And as I tried to explain the mechanisms of digital computation, I realized that it is a process that is fundamentally foreign to our common-sense, everyday understanding. In his masterly book on the subject, Code: The Hidden Language of Computer Hardware and Software, Charles Petzold uses telegraphic relay circuits — built out of batteries and wires — to walk the lay reader through the functioning of computing machines. And he points out:

> Samuel Morse had demonstrated his telegraph in 1844—ten years before the publication of Boole’s The Laws of Thought …
>
> But nobody in the nineteenth century made the connection between the ANDs and ORs of Boolean algebra and the wiring of simple switches in series and in parallel. No mathematician, no electrician, no telegraph operator, nobody. Not even that icon of the computer revolution Charles Babbage (1792–1871), who had corresponded with Boole and knew his work, and who struggled for much of his life designing first a Difference Engine and then an Analytical Engine that a century later would be regarded as the precursors to modern computers …
>
> Nobody in that century ever realized that Boolean expressions could be directly realized in electrical circuits. This equivalence wasn’t discovered until the 1930s, most notably by Claude Elwood Shannon … whose famous 1938 M.I.T. master’s thesis was entitled “A Symbolic Analysis of Relay and Switching Circuits.”4
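
The equivalence between switch wiring and Boolean algebra can be sketched in a few lines of Python. This is a toy model, not a circuit simulator: a switch is `True` when closed, and the functions `series` and `parallel` are illustrative names that simply trace whether current can find a path.

```python
from typing import Sequence


def series(switches: Sequence[bool]) -> bool:
    """Switches one after another: a single open switch breaks the only path."""
    current_flows = True
    for closed in switches:
        if not closed:
            current_flows = False
    return current_flows


def parallel(switches: Sequence[bool]) -> bool:
    """Switches side by side: any closed branch completes the circuit."""
    for closed in switches:
        if closed:
            return True
    return False


# Compare the wiring against Boolean AND and OR for every switch setting.
for a in (False, True):
    for b in (False, True):
        print(a, b,
              "series:", series([a, b]), "and:", a and b,
              "parallel:", parallel([a, b]), "or:", a or b)
```

In every row the series circuit matches AND and the parallel circuit matches OR, which is the correspondence the quoted passage describes.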

After Shannon, early pioneers of modern computing had no choice but to comprehend that you could build Boolean logic and binary numbers into electrical circuits and work directly with this equivalence to produce computation. That is, early computers required that you wire the logic of your program into the machine. If you needed to solve a different problem, you had to build a whole new computer. General programmable computers, capable of receiving instructions to process varying kinds of logic, were first conceived of by Charles Babbage in 1837, and Lady Ada Byron wrote the first-ever computer program — which computed Bernoulli numbers — for this imaginary machine, but the technology of the era was incapable of building a working model.5 The first electronic programmable computers appeared in the nineteen forties. They required instructions in binary — to talk to a computer, you had to actually understand Boolean logic and binary numbers and the innards of the machine you were driving into action. Since then, decades of effort have constructed layer upon layer of translation between human and machine. The paradox is, quite simply, that modern high-level programming languages hide the internal structures of computers from programmers. This is how Rob P. can acquire an advanced degree in computer science and still be capable of that plaintive, boldfaced cry, “But I Don’t Know How Computers Work!”6